Rage, rage, rage

Transference and Žižek on accelerationism

Title photo: Quinta da Regaleira, Portugal

1.

“Accelerationism” is a school of thought that first portrays an event as inevitable and then uses that inevitability to justify making the event come to pass ASAP. Why delay the inevitable?

I first heard it in reference to a better-known concept called the singularity, a prophesied point at which AI will gain and use the ability to make itself exponentially smarter. If this happens[1] then its behavior will be hard to predict.

The belief that it’s inevitable has a built-in wobble in its proverbial Jenga tower. The stiff breeze, the drunk person’s arm, the kid hyped up on sugar, is seen in the fact that others have started claiming the term. Some in America have adopted it in reference not to AI but to a second Civil War. A subset of them have started damaging public infrastructure in an attempt to hasten it.

For the first type of accelerationist, this really puts a bee in their bonnet, or whatever they wear to Burning Man now. If there are multiple things on the horizon with the potential to transform and upend our lives — if one can imagine, for example, this second Civil War coming to pass, followed by a radical group taking power and making us live like the Amish, due to the evils wrought by technology on God’s wondrous creation — then one could say this technological singularity wasn’t as singular as advertised.

2.

Slavoj Žižek makes this point in The Dialectic of Dark Enlightenment:

The madness of the present moment resides in this peaceful coexistence of radically different options: Perhaps we will all perish in a nuclear war, but what really worries us when we read the news is cancel culture or populist excesses—and ultimately we don’t really care even about this as much as we do about our canceled flight.

On some level, perhaps, we know these three levels of existence are interconnected, but we continue to act as if they are not.

Accelerationists who focus on the singularity are typically financially comfortable, leaving them more shielded than most from having to think about what makes these forces interlock. This is the rope that hogties them. They, as much as anyone, can feel equally upset about Ticketmaster’s monopoly one day and collapsing ocean currents the next, but they, as much as anyone, don’t like to be shown, let alone taught about, the common roots connecting most of these mild-to-severe crises.

They’ve been known to ban talking about politics in startup offices. They want to focus on, to forecast, one thing at a time, and for all else they hang up a “Bless This Mess” sign and call it a day. They stare, rapt, at superintelligent AI as though it’s the eye of a hurricane, yet are allergic to thinking about the meteorological forces around it, behind it, underneath it: capitalism, imperialism, patriarchy. They turn, smirking, to people nearby, saying “I know where all this is headed.” Many play along or shrug it off, but some, like Žižek, look back at them the way a meteorologist might after a lifetime of studying hurricanes. People like him (or bell hooks or David Graeber or take your pick) just understand this one’s sources and trajectory better. I think of Ron Swanson in a hardware store saying to an employee, “I know more than you.”

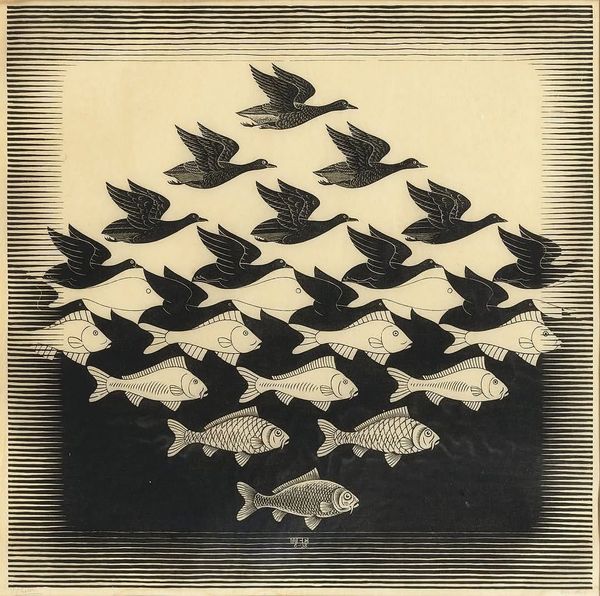

Žižek hammers this point home elsewhere in the essay in describing how accelerationist intelligentsia will react when the next slow-burning calamity reaches a critical point. Yuval Harari will write a pop history book. He’ll say “this was how the big wheel of history was always going to turn, don’t you know.” They will, in other words, engage in fervent revisionism about what we’re all seeing right now, right here, two inches in front of all of our faces: this tense coexistence of multiple crises, all of which venture capitalists hedge against with monetary bets. Accelerationists and their forefathers make up the mouth of a river, and though multiple tributaries join there, they marvel at and fixate on one of them, pretending against all available evidence that the others’ courses are irrelevant to them or their particular singularity. This self-defeating shortsightedness makes their out-of-breath forecasts about AI-and-only-AI look like doomed attempts to bite one’s own teeth.

While it’s a riot watching them cosplay at Burning Man as shamans and techno-priests and oracles of the far future just to get stuck in the mud, it also symbolizes how they could very well drag billions of us down into the digital mud with them. They press on with a boldness that reminds one of Napoleon Dynamite dancing.

3.

We’re back to the desert. Once upon a time, being a rugged man of nature was the move: living off the land, being self-reliant. Then it was being a space-age man, a rocket man, a man of the future. We’re back to the desert, except now there are art cars and blood boys.

The current draw of primitivism among accelerationists comes from the idea that technology will let them ditch the morass of modern geopolitics. In escape pods they’ll blast off, into the desert, into space, into the Amazon Web Services BrainCloud, far away from the pesky land and resource disputes their ancestors left behind. “You’ll own nothing and you’ll be happy,” they say, not just because you’ll rent but because in the BrainCloud the mere concept of “owning” has been done away with.

This is a process called “deterritorialization,” or the removal of things that traditionally constitute our existence like land and the body. Accelerationists, in supporting it, are filming an impressive if unimaginative sequel to what their great, great, great, great grandfathers started when they alienated natives from their land, or what their grandfathers continued when they paved paradise to put up a parking lot. Bro thought he could escape from the problems his forefathers unleashed by acting just like them. Lol. Lmao. Like them, accelerationists “interpret” a divine plan, now revolving around godlike AI, that conveniently happens to name them as its worthy shepherds. Seeing how the term is used in practice tells a different story. The singularity is uttered as a holy mantra but wielded as a brand, a political tool, boxing out other possibilities, whipping up fuel for the hype mill, laundering less noble goals, justifying treating non-tech-adjacent humans like trash.

What if, in fleeing into the cloud so reactively, further deterritorializing ourselves, we just set ourselves up for more oscillation, slingshotting back the other way, wanting to go “back to the land,” wanting to “feel something real” again? That feeling, in all likelihood, will be rage. “Rage is the easy way back to a realm of feeling. It can serve as the perfect cover, masking feelings of fear and failure,” writes bell hooks on emotional life under patriarchy. In their rage at their ancestors’ political mess, the singularitarians aim to yeet all we know, every remnant of the human condition, as far away as possible; that rage will have to go somewhere, be transmuted somehow, and how it will do so after merging with AI is anybody’s guess.

4.

The other day I pulled up to an intersection where a man in a wheelchair held a sign asking for money. I imagined an alternate universe: I’m in SF, working at some AI company, a bit dumber about history and more irritable. I take the discomfort rising in me at this man’s condition (rooted in a combination of the city’s housing policies, the way we treat the addicted and the mentally ill, the man’s choices, and more) and I let it fester into rage. Maybe I throw that discomfort back at him with a snide comment. Or maybe I stay quiet and seethe, longing for a day when I can upload my consciousness and forever escape these confrontations with my and others’ discomfort. Maybe, when I get to the office, I grind extra hard, making my boss extra rich, because of it.

People transfer and let out such feelings on people around them, in front of them, baristas and flight attendants and others, because those people are right there, in front of them; the real problems are more like ghosts, in one’s mind and out there in the world. Some ghosts are real. Sometimes the people who talk about them, about witches and spirits and vampires, are right, but are also just transferring, just naming the wrong things. There are ghosts that will follow you into cyberspace if you let them.

Accelerationists would have you believe that these ghosts, these feelings persisting in us and transforming in us thanks to things like patriarchy, have no common origin with the singularity; or if they do, then accelerationists have nothing to do with that origin; or if that’s true, then those feelings remain irrelevant to the one inevitable thing barreling toward us. Wrong. While facts don’t care about your feelings, they don’t have to for feelings to be real. Human feelings and drives, writ large across communities and civilizations, rational or not, push history onward. Over time, groups of humans put a little energy over here and a little energy over there. We carve out winding subterranean labyrinths that connect vast and ghostly political drives. If you want to escape the results of them, fine. But look at them. Walk them, trace them back, at least a single inch. Acquire the slightest idea of what you’re dealing with before you make several miles of predictions about them.

Bundled as tightly as it is with patriarchy, accelerationist thinking acts by grabbing our sublime awe at nature, the Other, the unknown (an unmanly emotion, i.e. one that isn’t rage) and transferring it. With clean, military, algorithmic efficiency, it refracts that awe into a bunch of worse things: fear, loathing, bottomless yearning.

“Rage, rage, rage against the dying of the light,” an accelerationist will say on the Lex Fridman podcast while surrounded by ring lights. They don’t address what a bummer it’ll be when they realize that, in the process, they’ve accidentally chained themselves, potentially for eternity, to a community of minds in digital space just as filled with rage as they are.

[1] While AI can now auto-generate code, it’s far from having the unified control and motivation to improve itself. It may be doing some mathematically intricate regurgitating, but it remains regurgitating. More importantly, even if those models started mimicking the human brain instead of doing autocomplete, they still wouldn’t get there on their own. The “AI detection of fake news” problem shows this fact. AI can summarize news articles until the cows come home, but at some point, to make it trustworthy, you need to give it the ability to “be there.” You need to give it a model of the truth. The real facts of what happened. I don’t mean “be there” in the form of a drone with a camera; I mean the ability to provide an account of it with the kind of chatty background, the rich context derived from real experience, that only a 70-year-old neighbor can provide. AI can’t be trusted as a news source until it’s able to do that. Consider it an expansion pack for the Turing test, which we’ve passed.